Issue #167 | The Questions CRO Doesn’t Want To Answer

Quick note before we get into it: this is the first of 2 issues on where most testing/CRO programs go wrong (this one) and what to do about it (Issue #168). Over the last decade, I’ve worked on hundreds of CRO projects and alongside dozens of consultants/agencies. Across those experiences, I started to notice a pattern – the brands/agencies that reaped massive rewards from their testing program approached it fundamentally differently than those that spun their wheels.

If you’ve ever felt like you’re doing all this testing but you can’t seem to get that breakthrough/breakout moment, this is the issue you should read. I’ve been there. The approach in this week’s issue is the result of years of mistakes, frustrations + lessons, all condensed into something like 4,000 words.

Let’s get to it.

A few years back, I was asked to audit an 8-figure brand spending ~$150k/month across Meta & Google. They felt their paid media operation wasn’t keeping pace with the other agencies they worked with (SEO, email, CRO) and wanted a second set of eyes. Nothing unusual. I figured it’d be a standard, run-of-the-mill review. But once I got into the accounts, the analytics & the website, hoo boy was I wrong.

Plot twist: the paid media agency was the least of anyone’s problems. In fact, they were doing a damn decent job. It wasn’t perfect, but the account was consistently performing AND driving a progressively higher percentage of sales each month. They were awful at reporting, but (I suppose) better for a paid media shop to be terrible at reporting and good at media buying, than terrible at media buying but good at reporting.

But all that raised a question: if the business was growing slowly (not ideal, but better than shrinking) and paid media was driving a progressively higher volume of sales and a larger share of total sales, then where was that share coming from? And – more importantly – why?

Since I’m on a bit of a Sherlock bender while flying back from Cape Town, I’ll give you the quick hits on everything else I found:

- SEO traffic was up, but conversions were down (CVR was WAY down)

- Direct traffic was flat(ish), conversions were down (CVR down)

- Paid media traffic was flat, conversions were up (CVR up)

- Referrals/Affiliates were up and conversions up slightly (CVR down barely)

- Site-wide (including organic social + some programmatic), traffic was up, conversions were up, CVR was ever-so-slightly up.

This is a brand that said they’d been investing in CRO for 18+ months. They raved about the diligence + rigor of their CRO program on the initial call. Yet…CVR was effectively flat? Something seemed off. This brand operated in an expanding category. There were no major negative shocks that would have wiped out even-below-average CRO gains (think: scandals, product issues, recalls, massively funded new competitors, the usual).

So…I asked for all the details on their CRO program.

What I got back was one of the most beautiful files I’ve ever seen. The team had run 47 tests in the prior 12 months (that’s basically 1 a week for a year, plus giving everyone off for Prime Day, Christmas + BFCM). Each one was logged and documented (impressive), they had verifiable hypotheses (we think this test will increase X metric by Y%) and even did a decent job of mitigating confounding variables + avoiding multicollinearity. If you just opened up the sheet or read the docs, it had the appearance of competence.

Their conversion rate increased from 2.3% to 2.5%. If you’re keeping track at home, that’s a 12% lift.

A full year of work. 47 tests. 100s of hours of design, dev, copywriting, QA. Well over $1M in media spend over that period, most of which directed traffic to pages running tests.

Something was clearly broken. You’d think the brand would stumble onto a few winning tests with durable results after a year. So, I started to comb through that beautiful file, line by line. I categorized each test. And slowly, but surely, a pattern emerged:

The brand didn’t have a testing problem. They had a test selection problem.

The entire sheet was full of “expected” tests – the ones that are easy to design, relatively fast to implement and unlikely to draw any objections from the brand or ruffle any of the other agencies’ feathers. That was problem #1. Problem #2 – the more serious problem – was the lack of connectivity from the specific tests in that sheet + the subsequent activities being done by the brand/other agencies.

Let me illustrate what I mean. There was a test where the logo bar was moved from midway down the homepage to directly below the hero section, next to the “buy” button featured offer. That test resulted in a material (~+18%) improvement in CVR on that page. Were any other LPs or PDPs changed? Nah. Did the email team take that learning (logos = validation/trust = we should include trust elements near our CTAs too) and apply it to their emails? Nope. Did the paid media team integrate logos into their creative? No more than usual. A test was run, a result was obtained, nothing was generalized.

When I zoomed all the way out, I found the brand was “winning” tests at a standard clip – about 25%. The problem was that the wins were both (a) clustered in low-impact areas and (b) never generalized or distributed, so those learnings could have a greater impact. When I asked about that, the CRO agency’s response was scientific: we didn’t test that – we tested X. The scope of X is limited to [wherever], so to claim that this test showed that [insert other agency/team here] should have done [whatever] is logically incoherent.

And technically, by the letter of the scientific law, they were right. If the goal was to get an article published in the Scientific Marketer, they’d have succeeded. But it wasn’t. The goal was to make the brand more money. And in that goal, they failed miserably.

To recap: every one of the individual tests, in isolation, was good. The execution of each test was exceptional. But the portfolio – taken together – was downright horrible. And, it turns out that 47 isolated tests, most in low-impact / low-risk areas, will produce exactly what you’d expect: 5-15% improvement in CVR over a year.

The question you’re probably wondering: why didn’t the brand notice? Well, they were test-blind. That’s like being snowblind, but instead of snow it’s a gorgeous testing database and a wonderfully precise analysis doing the blinding. Somewhere along the way, everyone lost sight of what really matters: improving the damn conversion rate + getting more sales.

The solution is to test differently. To do that, you need a framework that tells you both what to test and how big the swing should be.

The 2 Questions Every Test Has to Answer

The single most useful diagnostic question I’ve ever found for a testing program is also the most uncomfortable: for each test you’ve run, what was it for?

It sounds simple, but almost no one can answer it.

They’ll tell you the particulars of the test (“We tested adding social proof to the PDP.” “We tested a sticky CTA on mobile.”) They can tell you what the result was. Some can even tell you if they ran it once or multiple times. But very few people can tell you is what cognitive job the test was doing for the visitor – what question/pain the change was trying to answer, on behalf of which audience, at what stage of their decision process, with the expectation that resolving that question/alleviating that pain would produce the desired result (aka more sales, higher CVR).

That question – what is this test for? – is the foundation of any testing program. That sounds obvious when you read it, but look at the tests you’ve run lately. Do you have an answer for it? Without one, every test is a guess. A statistically rigorous guess, sure. But still a guess.

The answer is in two parts, and a successful testing program needs both:

The first is questions: which of the questions your audience has does the page/element/funnel (or whatever you call it) being tested target?

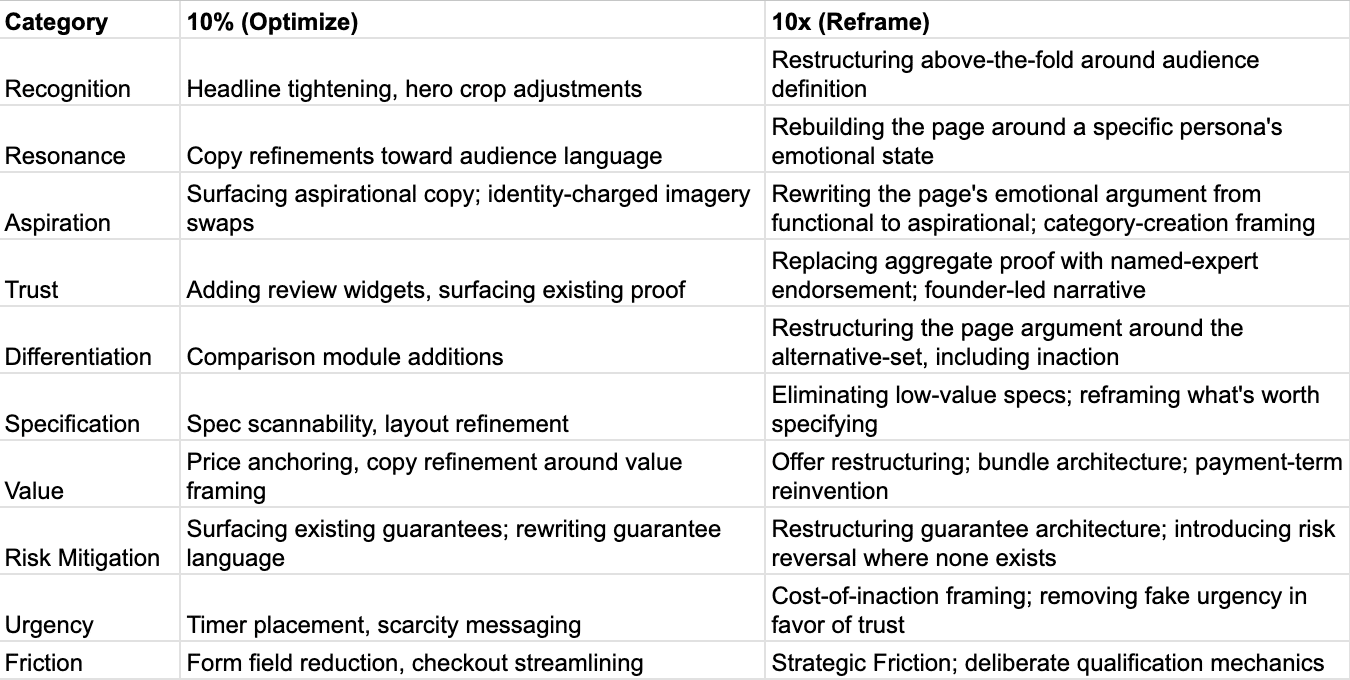

The second is magnitude: is this a 10% test, designed to incrementally improve a function that already works, or a 10x test, designed to fundamentally change what the function is or how it operates? (If the 10/10x distinction is unfamiliar, I wrote about it here. The short version is that every test you run should be categorized as either a small, incremental improvement on an existing system or a fundamental reinvention of one. Tests that fall into neither category are usually wasted spend.)

Almost no brands answer both questions. The few that answer one usually answer purpose, but do so poorly (which is why there’s limited connectivity between the tests run + the changes made following those tests). Almost no brand answers magnitude, because – candidly – a feature of any real 10x test is that it changes so many variables simultaneously that all scientific rigor goes out the window. Most CRO agencies/consultants don’t like that.

But – for the few groups that can and do answer both, the results are often incredible.

The 10 Questions

When we’re designing/building websites, we often begin with wireframes. Not because our creative team enjoys making them (believe me, they don’t), but because something magical happens when you strip away all the pretty pictures and nice fonts and fancy widgets from a page: you get a sequence of modules, designed to answer the questions a given audience segment is implicitly asking.

Each question answered well advances them toward the desired action. Any questions answered poorly (or not at all) provide a reason to leave.

There are 10 of these questions.

Every test you have ever run, every test you will ever run, addresses one or more of them. Sometimes a test addresses a single one. More often it addresses 2-3 at once, in which case the test is structurally compromised. You’ll get a result, but you won’t know which question the result was answering. That means the insight isn’t generalizable beyond that specific page in that specific context.

The questions, in roughly the order a visitor processes them, are:

1. Recognition: Am I in the right place?

This is the immediate question that must be answered near-instantly. The visitor arrives. They scan the hero section, maybe 1-2 modules lower with a quick swipe. In that instant, they’re deciding whether the page is even attempting to address the problem they came to solve. If that’s unclear, then every other question they might ask is moot because they’re gone. And even if you manage to keep them through some miracle, they’re going to be skeptical. Defensive. Suspicious. Looking at you the same way they look at a sales guy on a car lot.

Recognition is mostly determined by the hero section: headline, subhead, primary visual, the immediate context of the offer.

Tests in this purpose include:

- rewriting the headline to mirror the language of the source ad

- replacing a stock-aspirational hero with one that signals category specificity

- tailoring the hero section to the individual creator/influener featured in the ad

- restructuring the above-the-fold to lead with the audience definition rather than the product.

Wins here are often massive, because the cost of failing recognition isn’t trivial – it’s a step-function drop in anything below the fold ever seeing the light of day. The moment when a visitor arrives is (arguably) the most critical moment in the entire on-site experience – at that moment, you’ve done next-to-nothing to build trust or demonstrate your capability to help. Fumbling here is damn near impossible to recover from.

2. Resonance: Is this for someone like me?

Recognition answers whether the page is in the right category. Resonance answers whether it’s for the visitor specifically.

That might sound like a distinction without a difference, but it’s anything but.

A page for a senior living community can correctly identify itself as a senior living community in [whatever] market within 3s (recognition, check)…yet completely fail to resonate with the adult daughter doing the searching at 10:30 PM on a random Wednesday. The visitor doesn’t land on the page and think, “Oh, this is what I’ve been looking for” or “Wow, this community is different – they get it!”

This is where most CRO programs are weakest, because resonance requires a depth of audience understanding that most brands/agencies haven’t done the work to develop. The other challenge is you can’t test your way to resonance through copy variations alone. The underlying problem is usually that the team doesn’t actually know who the page is for, what the visitor fears, what language they use for their problem or what alternative they’re comparing the offer against.

Tests in this purpose tend to be high-impact when they’re done well:

- rewriting the page around a specific persona

- restructuring the messaging around the actual emotional state of the visitor

- surfacing the unspoken concern the visitor brought to the page in the language they would use to describe it themselves.

When you achieve resonance on a page, two things happen simultaneously: (1) the page stops feeling like marketing and starts feeling like recognition for the target audience (you’ll hear stuff like, “Wow, they get me” or “That’s unlike any page I’ve visited” if you ask your audience about it) AND (2) the page STOPS resonating with other audience segments.

That second part is where most people go wrong. If you’re sending a 50/50 split of 2 target audiences to a page, and resonance increases for 1…the test may show no lift at all. It might even show a decline if the increased resonance for segment #1 turns off segment #2. The way to mitigate that particular issue is to isolate the audience at the ad or email level first (email is easier to control).

3. Aspiration: Who do I become if I do this?

Resonance asks whether the page is for who your target audience is today (their current self); aspiration asks whether the offer is consonant with the version they want to become. That’s a different question, with a far higher lift potential when you actually answer it. Aspiration is the question that fitness coaches, GLP-1s and athleisure brands lean into with transformation pictures, fit/in-shape models, the “get your beach body in 60 days” messaging. That’s all aspiration. It’s appealing to the target audience’s desired version of themselves.

Ironically, aspiration is a question most performance-oriented CRO programs ignore, simply because it doesn’t show up in customer interviews or heatmaps or session recordings. It shows up in willingness-to-pay, in preference/time-to-purchase and in the visitor’s decision to share the brand/product/page with their friends.

Aspiration is what enables a luxury brand to charge 3x more than a competitor for the same functional benefit while converting at a higher rate. It’s what allows a category leader to maintain pricing power against low-cost / price-aggressive challengers indefinitely.

The underlying mechanism at work is identity. Every meaningful decision your target audience makes contains an implicit identity statement: they aren’t merely selecting a vendor or buying a product – they’re choosing a version of themselves that uses that product/service/whatever:

- Senior living: the family wants to be the kind of family that takes good care of mom

- Plaintiff law: the client wants to be the kind of person who fought back rather than rolled over

- B2B SaaS: the operator wants to be the kind of leader who runs a serious team with serious tools

- Apparel: the customer wants to be the kind of person who wears this brand

Tests here include:

- reframing the offer’s emotional center of gravity from functional to aspirational

- restructuring imagery and copy around the visitor’s desired self rather than their current problem

- surfacing identity-confirming social proof (the kind of person who buys this – not what other people said about it)

- integrating language that signals category membership rather than feature parity

Aspiration is where category-creation moves happen – the brand isn’t competing on better X, it’s defining what X means and inviting the visitor to align with that definition.

Aspiration tests are almost always 10x. The 10% version exists – surfacing aspirational copy in a more prominent position, swapping a generic hero for something with more identity charge – but the wins in this purpose come from rewriting the page’s emotional argument, not optimizing what’s already there. If your page is currently selling features and/or price, no aspiration optimization is going to turn it into a conversion engine – that only happens if you’re willing to rewrite the entire thing.

4. Trust: Is this real and legitimate?

Trust is the question that decides whether the visitor is willing to engage the offer at all, regardless of how attractive it looks. A compelling offer from an untrusted source isn’t compelling at all – it’s suspicious. Especially in today’s fraud-and-cybercrime-everywhere world, your audience’s (proverbial) Spidey senses are likely tingling.

Almost any visitor’s default state on any LP they’ve arrived at via paid media is mild skepticism: this might be a scam, are these guys legit, this might be a low-quality / questionable brand, what’s their angle, there’s probably a catch, I’m not sure I can trust this [brand/team/guy/gal].

If you don’t believe me, test it out yourself: ask a friend/significant other to scroll IG, click on a random ad for a brand s/he does not (don’t worry, IG will serve up plenty if you doomscroll for 5-10 minutes) and ask that person to narrate exactly what they’re thinking/feeling as they read/scroll the page. I’m willing to bet a buffalo nickel you’ll hear some variant of (at least) one of the above.

Trust is established through some combination of: social proof (reviews, testimonials, logos, press), credibility signals (founder presence, expertise demonstration, transparent operating history) and removing any disqualifying tells (terrible content, obvious stock imagery, contradictions between the ad and the page, too many “pressure” tactics).

Tests here include:

- integrating reviews into the body of the page rather than isolating them near the footer

- moving logos to the area right around the CTA

- adding client/customer testimonials (especially from other brands that specific audience segment is likely to love/trust)

- integrating tailored, hyper-relevant founder/executive content

- showcasing relevant awards/recognition

- displaying other forms of 3rd party credibility (press mentions, podcast features, etc.)

The truly interesting thing about trust is that it has asymmetric tests. Trust wins rarely produce dramatic lift on their own – most tests reduce variance rather than shift the mean. But (and this is the key part) the consequence of failing trust is a complete collapse of CVR regardless of how well every other purpose has been addressed. In other words: trust is the purpose where the floor matters more than the ceiling.

5. Differentiation: Why this and not something else?

Most LPs (and home pages, if we’re being honest) answer the question why this without also addressing the implicit corollary: and not the alternative. The visitor is comparing your brand, explicitly or implicitly, against 3 alternatives: a direct competitor, an indirect substitute and doing nothing. LPs that fail to address all 3 are leaving revenue/leads/sales on the table.

The most expensive differentiation failure is almost always the 3rd one: doing nothing. The visitor decides they’ll come back to it later, that this isn’t urgent, that they need to think about it more. That opens up Pandora’s Box – because Meta (and Google) suddenly assume that (a) that individual is IN-MARKET for [whatever] and (b) that clearly they aren’t satisfied with your brand, so IG should now stuff their feed with ads from every competitor under the sun. Even for an exceptional brand with a legitimately great product/service, that’s not a great outcome.

Tests targeting differentiation include:

- direct comparison tables (often more effective than brand teams expect, because the average visitor has not done the comparison the marketer assumes they have)

- explicit framing of the cost of inaction

- integration of competitor-anticipating language (“most options in this category do X — here’s why we don’t”)

- specific kinds of testimonials (i.e. the ones where the interviewee has first-hand experience with an alternative + speaks glowingly about your brand)

- Listicle-style pages (or sections) that seed questions in the visitor’s mind about competitors, alternatives or inaction

Differentiation tests are almost always 10x in nature when they’re done well, because the alternative-set framing usually requires restructuring the entire argument of the page rather than adjusting a single component.

6. Specification: What exactly am I getting?

Specification is the purpose most CRO agencies/teams over-test, because it has the most straightforward test design and the most defensible statistics. It answers the visitor’s concrete information requirements: what’s in the box, what’s in the service, what’s included/what isn’t, how big is the thing (aka will it fit here), what’s it made out of (is it stable/durable/high-quality), when will I get it, what will I get for this, how does this all get done, etc.

Specification matters, but it has a ceiling. A page that leans into the specification question but fails on any of the aforementioned questions (recognition, resonance, trust, or differentiation) will not convert well, because the info is being delivered to a visitor who has already mentally disengaged from the offer. That’s the error most teams make: assuming that specification is the thing people really want to know, when the reality is that it’s only relevant once the other questions have been satisfied.

Tests around specification include:

- placing specifications more prominently

- including explainers / how it works / process diagrams on the page

- restructuring spec layouts to make them easier to understand/scan

- replacing longer text descriptions with visualizations or comparisons

- eliminating specifications the visitor doesn’t care about & replacing with the ones they do

The final one (removing/eliminating some specifications) is often a 10x test, because it requires wholesale changes to the page structure, content + (in some cases) the offer.

7. Value: Is it worth the price?

Value decides whether the offer feels like a good deal. It is not, primarily, a question about price – though it can be in price-sensitive categories. More often, it’s a question about the perceived ratio between cost and the expected benefit, where both terms are constructed by the page rather than the price tag.

The reason most “price tests” fail to move conversion meaningfully is that they’re operating on the wrong side of the equation. Lowering the price changes the cost. The far higher-leverage move, in most categories, is changing the perceived value through framing. That can take many forms, including: (i) anchoring against a higher-priced alternative, (ii) shifting from price-per-unit to value-per-month; (iii) highlighting benefits the visitor wasn’t thinking about, (iv) changing the offer structure via choice engineering or bundling (i.e. adding a dummy option).

Tests here include:

- offer restructuring (10x)

- price anchor placement (10%)

- guarantees (varies – a money-back guarantee is 10%; a “we’ll keep working until you’re satisfied” guarantee with explicit success criteria can be a 10x)

- AOV mechanics like bundles, gifts-with-purchase, charitable donations with purchase (like bonobos giving away a pair of socks for every one you buy), tiered packages (usually 10x)

The brands that answer the value question well treat it as a structural problem in offer design rather than a copy / image / A+ content challenge.

8. Risk Mitigation: What happens if I’m wrong?

Let’s assume your visitor has decided the offer is legitimate, for them and of sufficient value…yet they still haven’t moved forward.

What’s the issue?

Most marketers jump to friction – something must be broken. The experience must suck. The checkout is too long. The form is messed up. Sometimes, that’s right. But, most times, it’s something else entirely: the unspoken question every economically rational buyer asks before parting with money or information: what’s the downside if this turns out to be a mistake?

Risk mitigation answers that question.

Money-back guarantees, free trials, cancel-anytime policies, free shipping, free returns, performance guarantees, warranties, satisfaction commitments, “we don’t bill until you’re happy” payment structure – all of it falls into this category. There’s a reason airlines dedicate massive amounts of prime real estate to trip insurance and 24-hour cancellations – because it works!

But the flip side is also true: if the presence of risk mitigation elements often increase the conversion rate on a page, their absence can tank it, all via the same mechanism. If your visitor has no idea how s/he is protected if this is wrong, the natural response is to assume the worst…which is often a conversion killer.

Candidly, this is one of the most under-tested and highest leverage questions in CRO. The reason most teams under-test it is the same reason they under-test most 10x tests: it requires operational changes, not just creative changes. A “30-day money-back guarantee” test isn’t really a copy test – it’s a refunds-policy test masquerading as a copy test. The brand has to actually be willing to honor what the page promises. Getting to that point often requires buy-in with multiple other groups (CFO, CEO, COO) – which also comes with the downside of those same people paying a lot of attention to the test.

Tests here include:

- surfacing existing guarantees more prominently (10%)

- rewriting guarantee language from legalese to confident operator (10%)

- restructuring the guarantee itself (changing duration, conditions, mechanics, criteria (10x))

- adding meaningful risk mitigation

- where none currently exists (10x).

This is the marketing equivalent of T Mobile’s “uncarrier moves” (i.e. getting rid of contracts, making bills transparent, etc.) – changes that require competitors to restructure offers simply to maintain relevance or compete.

One note on the trust vs. risk mitigation boundary: trust answers “is this brand legitimate?” Risk mitigation answers, “What’s my downside if I’m wrong?” A visitor can fully trust a brand and still hesitate, because the downside of a mistake feels overwhelming or catastrophic. Conversely, a visitor can have low trust but still convert if the risk mitigation is strong enough to absorb the consequence of being wrong. They’re related – higher levels of trust reduces perceived risk, and improved risk management builds trust through implied confidence. Thus, they’re similar but distinct questions that must be tested separately.

9. Urgency: Why do I need to do the thing now?

Urgency is the question most marketers instinctively push first, and the one most often misused.

Most “urgency” implementations – think countdown timers, “only X left in stock” widgets, this deal expires in Y days, whatever other generic scarcity messaging you can think of – pattern-match to the fake urgency your audience has developed visceral aversion to. Sure, some of these tests do convert at the margins, but they erode trust in proportion to the conversion lift, which means the long-term effect is often (at best) a wash and (at worst) a net-loss.

Real urgency comes from one of 3 sources: legitimate scarcity (this product/service is genuinely limited), legitimate timing (this offer genuinely changes on a date the visitor can verify) and/or the cost-of-inaction framing introduced under differentiation (the problem the visitor is trying to solve is getting worse while they delay). The final one is often the most effective and the least used – likely because (as with differentiation + resonance) it requires truly understanding the target audience.

Tests that address this question include:

- surfacing the cost of waiting in concrete terms (most effective in lead gen and considered purchases)

- redesigning offer windows to align with legitimate operational realities (i.e. launching a new product line; aligning promos to common “moments” like home-buying season, graduation or back-to-school)

- turning off / turning away customers once a threshold has been reached (a great example of this is product drops, where you’ll get a message that you missed out on this one and check back whenever – there’s no better way to train a customer that you’re being serious than to demonstrate just how serious you are

- removing fake urgency that’s suppressing trust

The final 2 are both counterintuitive tests that most brands won’t run, because they assume removing scarcity messaging will hurt. The data tells a different story. In ~50% of the accounts I’ve seen it tested in, that doesn’t happen because the trust gain offsets the urgency loss. That’s a 10x finding hiding inside a 10% test.

10. Friction: How easy is it to act?

Friction is the purpose most CRO programs over-test in raw volume but under-think strategically. It covers everything mechanical between the visitor’s intention to act and the completion of that action: form fields, page speed, checkout process, mobile experiences, error handling, payment options, account creation, all of it. Basically – anything that stands between your visitor deciding they want what you’re offering and them giving you money/information falls into this category.

While reducing friction matters, it’s worth noting that friction has the lowest ceiling of any question on this list, because the relationship between friction reduction and conversion lift is both sublinear and asymptotic. A checkout that converts at 65% can be optimized to 70%. No amount of work will take it to 95%. There’s a structural limit on how much friction reduction can improve any mechanical process (which is also true in nature).

The other thing about friction that most brands/agencies miss: not all friction is bad. There’s an entire body of behavioral economics – the IKEA effect, the endowment effect, commitment escalation – that demonstrates how strategic friction (asking the user to invest cognitive or physical effort before the conversion event) increases post-conversion engagement, retention and/or willingness to pay. The frictionless experience that maximizes top-of-funnel CVR often produces lower-quality customers. That’s a tradeoff most CRO agencies don’t even acknowledge, let alone test (I went deeper on this in Issue #145, if you want the full argument)

Tests addressing this question tend to be 10% by their nature:

- incremental form optimization

- checkout streamlining/reduction of checkout screens

- mobile UX refinement.

The 10x version of a friction test is usually strategic in nature: deliberately adding friction in a place where it produces ownership or qualification, while removing it from places where it produces drop-off. The net effect is fewer leads or transactions, but materially higher quality on what comes through.

Magnitude: 10% or 10x

The 10 questions give you the X-axis of a testing matrix. The Y-axis is magnitude.

Every test, in addition to the question it addresses, has a magnitude – and the magnitude determines what kind of result it can produce. A 10% test on a given question optimizes the existing answer to that question. A 10x test on the same question aims to fundamentally rewrite the answer.

These aren’t 2 ends of a continuous spectrum. They’re categorically different operations that produce categorically different outcomes.

A 10% trust test adds another review section, integrates some 3P credibility near the CTA or adjusts the placement of existing trust signals. A 10x trust test asks whether the entire trust architecture of the page is correct – whether the brand should be leading with a VSL instead of star ratings, whether the social proof should come from named experts rather than aggregated review counts, whether the trust mechanism the brand has built its credibility on is actually the one the audience is most likely to believe. The first kind produces a few percentage points of lift, sometimes. The second, when it works, results in a page that an audience segment intuitively finds trustworthy, for reasons they can’t quite articulate…which often comes with massive increases in CVR and/or AOV.

The reality is that most agencies (and most CRO experts) do two things: (1) they run virtually no 10x tests and (2) they tend to only run tests that address a few of the 10 questions.

Why?

Start with the fact that 10% tests are easier to design + easier to manage. They have lower internal political costs (you’re not asking anyone to rethink anything important). They produce statistically defensible results within reasonable timeframes. They get approved without escalation. If they fail, the cost is trivial – at worst, you’ve lost 5-10% of sales or leads for a few weeks, limited to a subset of pages/products/services. Annoying, but not catastrophic.

10x tests are more difficult across every dimension. They require strategic thinking before they can be designed. They have higher political cost (you’re asking an executive or brand team, the design team, sometimes the founder, to entertain a fundamental rethink of something they consider settled). They produce results that are harder to interpret, because the variance is wider. They typically require more approvals (especially at bigger companies). When they fail, it’s less like pop rocks + more like fireworks (aka people notice). If a 10% test failure costs $5k-$10k, a 10x test failure can cost $100k+. That’s…noticeable.

The result of these incentives, for most brands/orgs, is a testing strategy that systematically under-allocates to 10x tests. The CRO team/agency produces what they’re rewarded for producing: defensible 10% tests that win at predictable rates. The CVR or AOV or whatever ticks up by a few points here and there, but eventually flatlines as the cumulative impact of those minor, low-stakes tests can’t escape the structural limitations of the existing structure. The only way to do that is to run 10x tests, which requires a willingness to work against the incentive structure (often, at some real risk – bad 10x tests can get someone fired).

All of this got me thinking: what happens if we combine these 2 axes for evaluating tests into a single, coherent matrix? It turns out the result is both incredibly cool + wildly informative:

Classify every one of the tests you’ve run into this matrix and a pattern emerges almost instantly. The most common is heavy concentration in friction-10% and specification-10%; moderate presence in trust-10%, value-10%, urgency-10%; near-zero presence in everything labeled 10x; and most tellingly, near-zero presence in recognition, resonance, and aspiration regardless of magnitude. Every once in a while, there’s one for risk mitigation.

That pattern is almost universal. It also explains, with almost no further analysis required, why most testing programs fail to produce consistent, measurable improvement in the metrics they’re targeting: the tests are clustered in cells where the ceilings are lowest. The cells where the ceilings are highest – the 10x cells of the 5 foundational questions – are systematically ignored by the incentive structure of the program.

That’s precisely what happened to the brand I described at the beginning of this article. Their testing program was beautifully executed. The individual tests themselves were well-run, with each one being defensible as something worth testing. What was missing wasn’t testing (there was plenty of that) – it was connectivity between the individual tests and the bigger picture of what those results implied for their overall marketing.

The fix for this brand was moving them out of their p-value-obsessed testing structure and giving them the framework to see (1) where the gaps in the program were (aka the matrix above) and (2) help them transform their isolated tests into brand-wide wins.

That’s where we’ll pick up in the next article.

Part II covers the audit (how to classify your tests into the matrix without lying to yourself or looking bad), how to prioritize what to do next, how to connect the matrix to your audience insights + understanding & the practical implementation guide (what to actually do on Monday morning).

But that’s next time. For now, start thinking about which of those questions you haven’t thought about or tested – and how you might go about doing it.

Cheers,

Sam